But now we immediately face daunting challenges about the meaning of these terms. What are the limits of a “medical decision”? What are the limits of the public health clause? Can the regulation of the practice of medicine impinge on medical decisions if, for example, a procedure is regulated out of availability? Does this create an immediate tension between the preamble and the restrictive clauses?

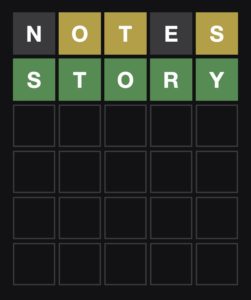

Let’s take a version of Putnam’s concerns about meaning. What is a neutrino? Many people would simply shrug and admit that they don’t know. Some would recall something like a particle that can pass through stuff. A few, those with some physics background or who are widely read, might say that neutrinos are very light particles that emerge from neutron decay and are needed to balance the nuclear decay equation. This last series of images might include thoughts about giant underground detector baths of water or mineral oil or something. In general, though, we can conclude that defining something that is physical and measurable, yet only incompletely understood, is a daunting task.

Legal theories have this kind of amorphous semantics, especially with regard to concepts like “liberty.” We certainly have some indelible images like “your liberty ends at my nose,” but that doesn’t create a very effective template for legal decision trees. Does a stand-your-ground law preserve my liberty of self-defense, or is it an excessive application of force when the two parties’ joint right to life is better preserved by a duty to retreat? Dobbs v. Jackson Women’s Health Organization lays out the problem of defining liberty:

“Liberty” is a capacious term.