I was reading Muriel Rukeyser’s poetry and marveling at some of the lucid yet novel constructions she employs. I was trying to avoid the grueling work of comparing and contrasting Biden’s speech on the anniversary of January 6th, 2021 with the responses from various Republican defenders of Trump. Both pulled into focus the effect of semantic and pragmatic framing as part of the poetic and political processes, respectively. Sorry, Muriel, I just compared your work to the slow boil of democracy.

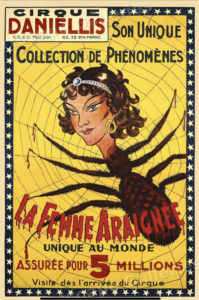

Reaching in interlaced gods, animals, and men.

There is no background. The figures hold their peace

In a web of movement. There is no frustration,

Every gesture is taken, everything yields connections.

There is a theory about how language works that I’ve discussed here before. In this theory, primarily from Donald Davidson, the meanings of words and phrases are tied directly to a shared interrogation of what each person is trying to convey. Imagine a child observing a dog while a parent says “dog” and is fairly consistent with that usage across several different breeds that are presented to the child. The child may overuse the word, calling a cat a dog at some point, whereupon the parent corrects the child with “cat” and the child proceeds along through this interrogatory process, triangulating in on the meaning of dog versus cat. Triangulation is Davidson’s term, reflecting three parties: two people and the thing or idea they are discussing. In the case of human children, we also know that there are some innate preferences the child will apply during the triangulation process, like preferring “whole object” semantics to atomized ones, and assuming different words mean different things even when applied to the same object: so “canine” and “dog” must refer to the same object in slightly different ways since they are differing words, and indeed they do: dog IS-A canine but not vice-versa.
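The asymmetry in that last claim can be pictured as a toy taxonomy. This is only a sketch: the `PARENT` table, its entries, and the `is_a` helper are hypothetical names made up for illustration, not anything drawn from Davidson or from research on how children learn words.

```python
# Hypothetical toy taxonomy: each term points to its immediate parent category.
PARENT = {
    "dog": "canine",
    "wolf": "canine",
    "canine": "mammal",
    "cat": "feline",
    "feline": "mammal",
}


def is_a(term: str, category: str) -> bool:
    """Return True if `term` is `category` or sits somewhere beneath it."""
    current = term
    while current is not None:
        if current == category:
            return True
        # Walk one step up the hierarchy; None once we run out of parents.
        current = PARENT.get(current)
    return False


print(is_a("dog", "canine"))   # True: every dog is a canine
print(is_a("canine", "dog"))   # False: not every canine is a dog
print(is_a("cat", "canine"))   # False: the correction the parent supplies
```

The relation only runs upward, which is why the two words can pick out the same animal in the yard while still meaning slightly different things.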