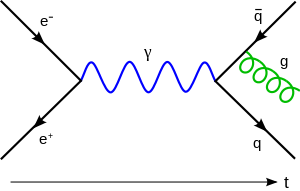

The simulation hypothesis is perhaps a bit more interesting than how to add clusters of neural network nodes to do a simple reference resolution task, but it is also less testable. That is the nature of big questions; otherwise they would have been resolved by now. Nevertheless, some theoretical and experimental work has been undertaken on the question of whether we are living in a simulation, all based on the assumption that the strangeness of quantum and relativistic realities might be a result of limited computing power in the grand simulator machine. For instance, in a virtual reality game, only the walls that you, as a player, can see need to be calculated and rendered. The walls that are out of sight exist only as a virtual map in the computer’s memory or persisted to longer-term storage. Likewise, the behavior of virtual microscopic phenomena need not be calculated so long as the macroscopic results can be rendered, like the fire patterns in a virtual torch.
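To make the game analogy concrete, here is a minimal Python sketch of rendering only what the player can see. Everything in it is an illustrative assumption rather than how any particular engine works: the `WALLS` map, the 90-degree field of view, and the `is_visible` and `render_frame` helpers are all hypothetical names. The point is only that the cheap map always exists, while the expensive detail is computed the first time a wall comes into view.

```python
import math

# Hypothetical wall map: name -> (x, y) position. This is the cheap "virtual map"
# that stays in memory whether or not anyone is looking.
WALLS = {
    "north": (0.0, 10.0),
    "east": (10.0, 0.0),
    "south": (0.0, -10.0),
    "west": (-10.0, 0.0),
}

# Walls whose expensive detail has actually been computed so far.
rendered = {}


def is_visible(player_pos, facing_deg, wall_pos, fov_deg=90.0):
    """Return True if the wall lies within the player's field of view."""
    dx = wall_pos[0] - player_pos[0]
    dy = wall_pos[1] - player_pos[1]
    angle_to_wall = math.degrees(math.atan2(dy, dx))
    # Smallest signed difference between the two angles, in [-180, 180).
    diff = (angle_to_wall - facing_deg + 180.0) % 360.0 - 180.0
    return abs(diff) <= fov_deg / 2.0


def render_frame(player_pos, facing_deg):
    """Render only visible walls, computing detail the first time each is seen."""
    frame = []
    for name, pos in WALLS.items():
        if is_visible(player_pos, facing_deg, pos):
            if name not in rendered:
                # Stand-in for the costly rendering work.
                rendered[name] = f"detailed texture for {name} wall"
            frame.append(name)
    return frame
```

Calling `render_frame((0.0, 0.0), 90.0)` with the player at the origin facing north touches only the north wall; the other three remain entries in the map until the player turns toward them.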

So one way of explaining physics conundrums like delayed-choice quantum erasers, Bell’s inequality, or ER = EPR might be to claim that these sorts of phenomena are artifacts of a low-fidelity simulation necessitated by the limits of the simulator computer. I think the likelihood that this is true is low, however, because we can just as easily imagine an infinitely large cosmos that merely includes our universe simulation as a mote within it. Low-fidelity simulation constraints might give experimental guidance, but the same results could equally be explained by simply accepting indeterminacy and non-locality as fundamental features of our universe.

It’s worth considering, however, what we should think about the nature of the simulator given this potentially devious (and poorly coded) little Matrix that we find ourselves trapped in.